The Dynamics 365 2019 Wave 2 Update is now GA. At Barhead we’ve been reviewing the new features in D365 For Marketing. Here’s a quick look at one of them: the new A/B Testing functionality.

A/B testing addresses the business challenge of optimising your results by incrementally testing variations of your content. It’s a key tool in the marketer’s toolkit.

In the current version of D365fM it’s perfectly possible to construct an A/B test, but only manually: create the A and B versions of the email, add a splitter tile to the journey and add each email variant to its own track. Go live, then manually evaluate the results to determine the winner and reconfigure the journey to use that version. Your journey would look something like this:

A/B Testing in D365fM – Pre 2019 Wave2

With Wave 2 Microsoft are introducing a much better A/B testing approach, with in-email A/B testing and automated winner detection and allocation. Build one version of your email, add your A/B testing variants, and when the winner emerges, all remaining sends automatically get this variant.

Here’s a quick scamper through how this works:

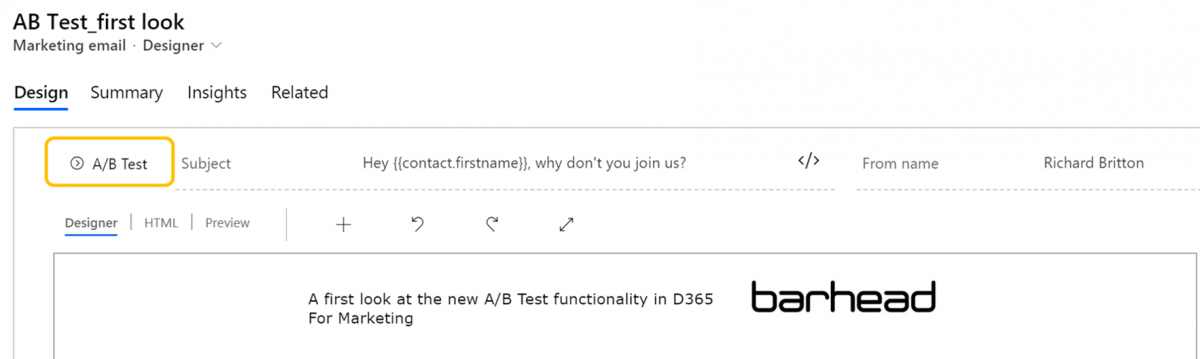

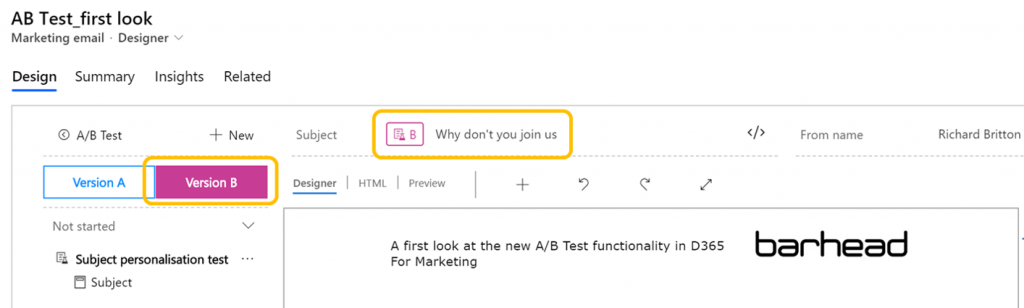

Create a new email and one of the first things you’ll see is an A/B test button on the email header ribbon:

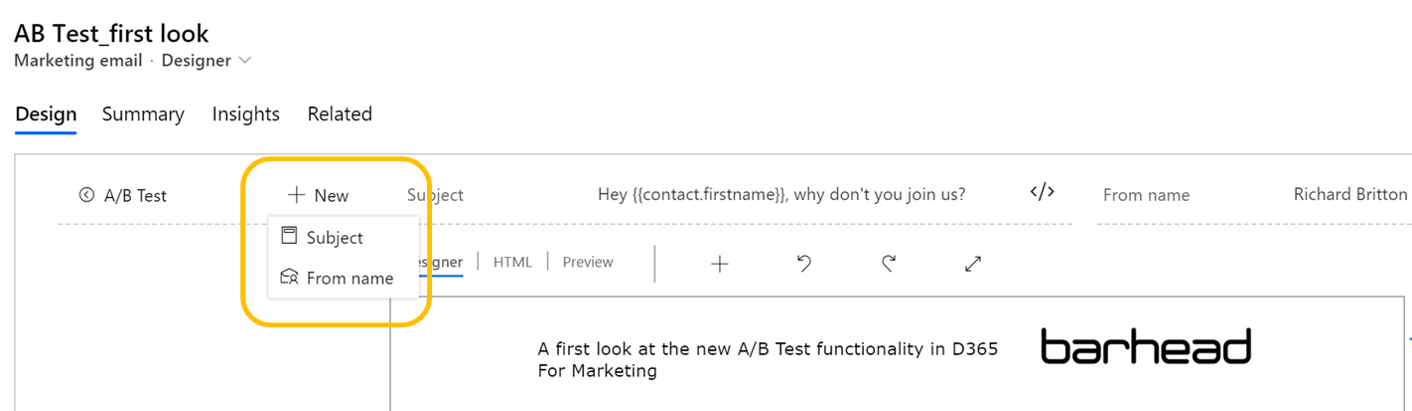

Expand this button, click +New and select from Subject line or Sender (‘From name’) tests.

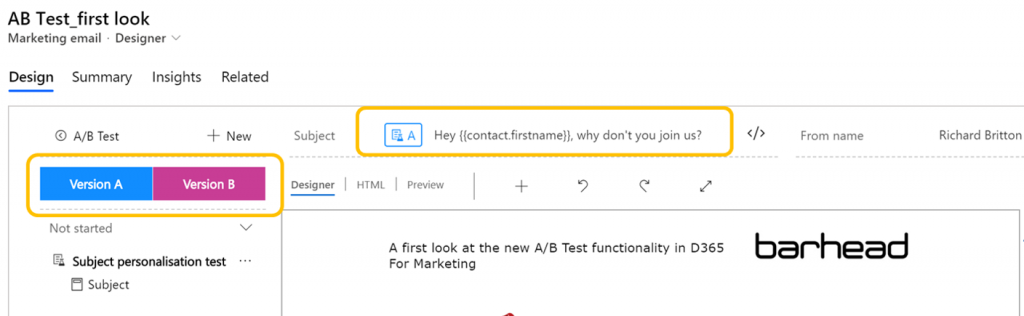

For this first look I’m just going to try a Subject line test, with and without personalisation.

Adding the A & B variants is very straightforward. Here’s variant A, with a personalised Subject line:

And variant B, with no personalisation:

You can add more test variants – and then select which one to test in the Journey Designer.

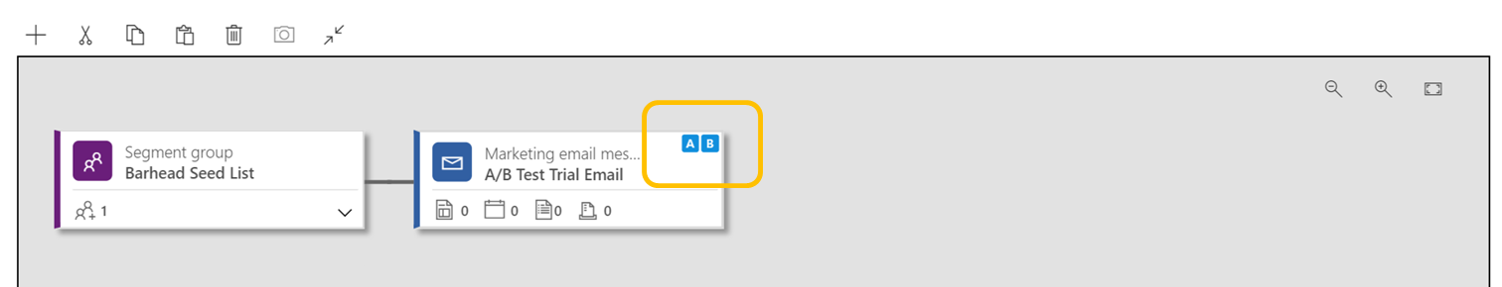

Test and go-live with this email, then head into the Journey Designer. Add your email that includes the A/B test and you’ll notice the email tile has small blue A/B icons in the top corner.

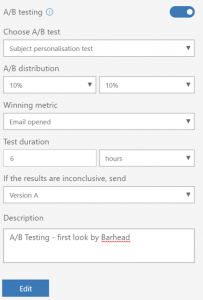

Activate the A/B testing slider, and select your test parameters. Key parameters include:

- Which test to execute (e.g. Subject personalisation test);

- The A/B test sample sizes (in this case 10% + 10%);

- The winning metric (e.g. email clicked);

- Test duration (in this case 6 hours);

- What to do if the initial test is inconclusive.
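To make the sample-size parameter concrete, here is a minimal Python sketch of how a 10% + 10% split might carve up an audience. It is purely illustrative: `ABTestConfig` and `allocate` are hypothetical names, not part of any Dynamics 365 API, and the rounding and shuffling are assumptions, since the product's exact allocation method isn't covered here.

```python
import random
from dataclasses import dataclass


@dataclass
class ABTestConfig:
    """Hypothetical mirror of the journey tile settings (not a D365 API)."""
    test_name: str
    sample_pct_a: float   # share of the audience that receives variant A
    sample_pct_b: float   # share of the audience that receives variant B
    winning_metric: str   # e.g. "clicks"
    duration_hours: int
    fallback: str         # variant to send if the test is inconclusive


def allocate(contacts, config, seed=0):
    """Randomly split contacts into test group A, test group B and an on-hold group."""
    rng = random.Random(seed)
    shuffled = list(contacts)
    rng.shuffle(shuffled)
    # Assumption: simple rounding; the real product may round differently.
    n_a = round(len(shuffled) * config.sample_pct_a)
    n_b = round(len(shuffled) * config.sample_pct_b)
    group_a = shuffled[:n_a]
    group_b = shuffled[n_a:n_a + n_b]
    on_hold = shuffled[n_a + n_b:]  # these wait for the winning variant
    return group_a, group_b, on_hold


config = ABTestConfig(
    test_name="Subject personalisation test",
    sample_pct_a=0.10,
    sample_pct_b=0.10,
    winning_metric="clicks",
    duration_hours=6,
    fallback="A",
)
group_a, group_b, on_hold = allocate(range(1000), config)
print(len(group_a), len(group_b), len(on_hold))
```

For an audience of 1,000 contacts, this leaves 800 contacts on hold until a winner is chosen or the test duration expires.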

If you want to revisit the email, click on the Edit button.

When you’ve configured and tested the journey, go live and the email sends will start.

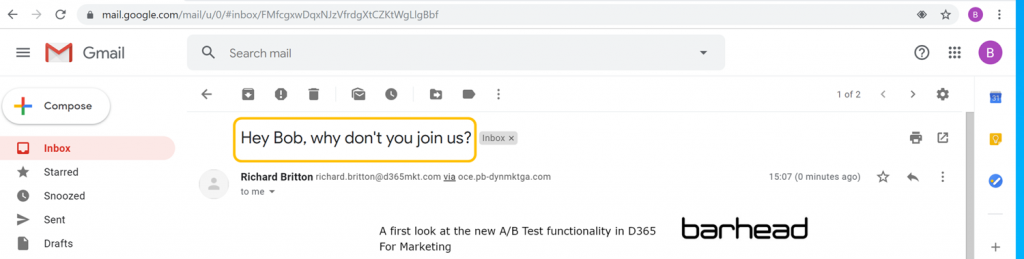

For this test I used a seed list of 11 contacts. Here’s a sample of version A, showing the personalisation:

And here’s Version B:

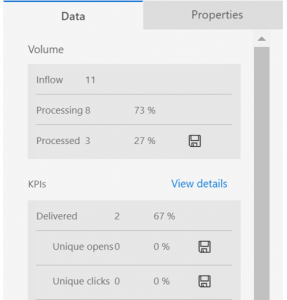

Once the emails start flowing, you can quickly view the KPIs, including the ‘winning’ variant. As you’ll see, of the 11 contacts in the seed list only 2 have been sent (the 2 examples above, plus our hard bounce account in the seed list); the rest are on hold waiting for a winner (or for the ‘test inconclusive’ time period to pass).

For this test, we opened and clicked only one of the emails, to confirm that the auto-selection of the winner would work. And work it did, just as expected. The remaining 7 emails received the winning version, six hours after the test went live.
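The winner-selection behaviour seen in this test can be approximated with a short Python sketch. This is an assumption-laden illustration, not Microsoft's algorithm: `pick_winner` is a hypothetical function, it simply compares raw rates for the chosen metric, and it treats a tie as ‘inconclusive’, falling back to a pre-selected variant. The rule the product actually applies to declare a winner isn't described here.

```python
def pick_winner(results, metric="clicks", fallback="A"):
    """Return (variant, rates) using a naive highest-rate rule.

    results maps a variant name to its counts, e.g.
    {"A": {"sent": 1, "clicks": 1}, "B": {"sent": 1, "clicks": 0}}.
    Hypothetical helper for illustration only; not a D365 API.
    """
    rates = {
        variant: (counts[metric] / counts["sent"] if counts["sent"] else 0.0)
        for variant, counts in results.items()
    }
    best = max(rates.values())
    leaders = [variant for variant, rate in rates.items() if rate == best]
    if len(leaders) != 1:
        # Assumption: a tie counts as inconclusive, so use the fallback variant.
        return fallback, rates
    return leaders[0], rates


# Mirrors the contrived test above: only one of the two test emails was clicked.
results = {
    "A": {"sent": 1, "clicks": 1},
    "B": {"sent": 1, "clicks": 0},
}
winner, rates = pick_winner(results, metric="clicks", fallback="B")
print(winner, rates)
```

With samples this small any result is anecdotal; a real campaign would want far larger test groups before trusting the outcome.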

For another review, check out this blog post by Jesper Osgaard, one of Microsoft’s leading SMEs on Dynamics 365 For Marketing.

In summary: admittedly a contrived test, but I’m impressed with both the user experience and the outcomes. There are a few areas that I’m hoping will be addressed before GA in October (for example, it’s not possible to see which version of the test a contact received) but overall, pretty impressive.

Dynamics 365 For Marketing

Dynamics 365 For Marketing is a richly-featured marketing automation application from Microsoft, natively integrated with Dynamics 365 Customer Engagement. Introduced in May 2019, it already has a strong worldwide user base and gains all the benefits of being an embedded part of the Microsoft Power Platform.

Core features & functionality include:

- Digital marketing. Journeys, segmentation, automation, forms, landing pages, website tracking – all available and easily configurable without reliance on IT or agencies

- Advanced segmentation & marketing lists. Create sophisticated segments and audiences for your campaign, seamlessly combining profile and interaction attributes

- Fully-featured events management platform. Run a conference, or a party, with full tracking of attendees

- Surveys. Embedded survey functionality, with responses written directly back to the CRM, means no need for a separate survey tool

- Turn-key application. Installed onto your existing D365 CE application(s), Dynamics 365 For Marketing works out of the box

- Highly configurable & extensible. Extend and enhance with Flow, PowerBI and PowerApps. Add connectors and integrations from the Microsoft AppSource. The options and opportunities are endless

Barhead Solutions Marketing Practice

Barhead is a specialised consulting firm focused on delivering business solutions, leveraging Microsoft’s Technology Stack. We believe that it is a combination of people, technology, and business drivers that underpins the most successful implementations.

The Marketing Solutions team at Barhead are a dedicated and specialised group, focussing on helping our clients deliver value from marketing technology. Our services span:

- Select & buy. We can help you find the most appropriate solution to your marketing requirements, across the Microsoft, Adobe and ISV portfolios;

- Implement, configure, integrate, extend. Systems integration is Barhead’s central competency – we have supported full end-to-end implementations for many clients;

- Value realisation & customer success. Great marketing solutions start with great implementations, but need ongoing attention, support and guidance. We can provide ongoing advisory, upskilling & guidance to ensure our clients continue to realise full benefits from their solutions;

- Support. We can provide cost-effective operational support and managed services. From staff augmentation to full managed services, we can help.

Richard Britton

Richard leads the Marketing Solutions team at Barhead. As a 20-year veteran of the marketing automation industry, Richard has led and/or supported many marketing technology projects. With a career that spans consultancy and client-side, Richard has a deep wealth of experience and expertise in all aspects of technology-based marketing.

Richard can be contacted at richard.britton@barhead.com. Alternatively, you’ll find Richard on LinkedIn at linkedin.com/in/rabritton/ or on Twitter at @richardbritton.