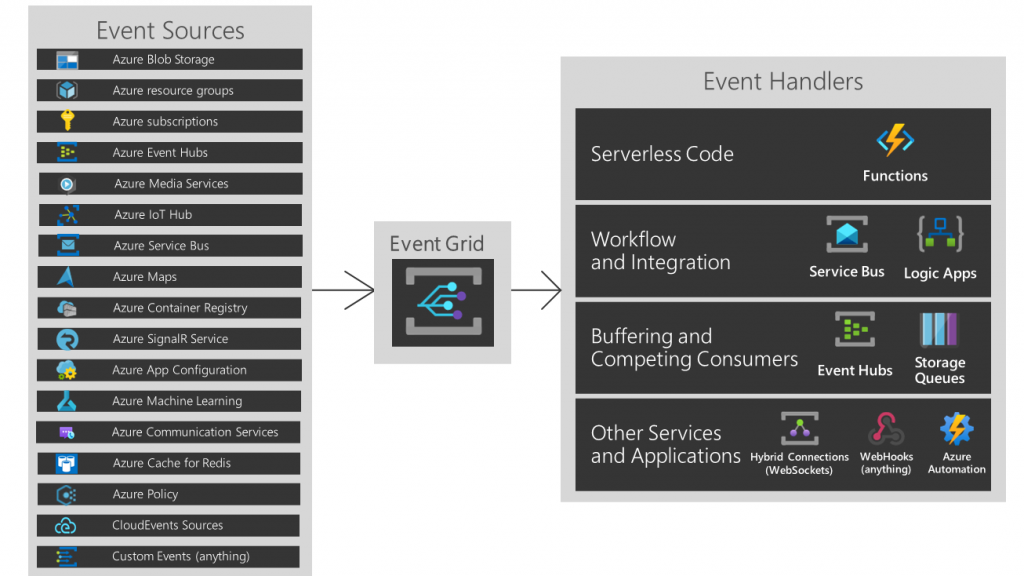

Event-driven architecture (EDA), as described on Wikipedia, is a software architecture paradigm that promotes the production, detection, and consumption of events, and the reaction to them. An event can be defined as ‘a significant change in state’. In Dataverse terms, this could be the creation, update, or deletion of a record, among other things. EDA lets us decouple the source and target systems from point-to-point integrations and processing: the source system (the Event Publisher) simply generates a message or event, and middleware picks it up and notifies the subscribers (the Event Handlers). Event Publishers are not concerned with who the Event Handlers are or how they will process the event.
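To make the decoupling concrete, here is a minimal in-memory sketch of the idea. The `EventBroker` class, the `account.created` event type, and the two handlers are hypothetical names invented for illustration; the broker is only a toy stand-in for real middleware such as Event Grid.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Event = dict
Handler = Callable[[Event], None]


class EventBroker:
    """Toy in-memory stand-in for the middleware between publishers and handlers."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        # Handlers register interest in an event type; the publisher never sees them.
        self._handlers[event_type].append(handler)

    def publish(self, event: Event) -> int:
        """Deliver the event to every handler subscribed to its type; return the count."""
        handlers = self._handlers.get(event["eventType"], [])
        for handler in handlers:
            handler(event)
        return len(handlers)


broker = EventBroker()
audit_log: List[str] = []
notifications: List[str] = []

# Two independent handlers react to the same event.
broker.subscribe("account.created", lambda e: audit_log.append(e["data"]["name"]))
broker.subscribe("account.created", lambda e: notifications.append("Welcome, " + e["data"]["name"]))

# The publisher only knows about the broker, not about the handlers.
delivered = broker.publish({"eventType": "account.created", "data": {"name": "Contoso"}})
```

Adding a third handler later would not require any change to the publishing code, which is the property the rest of this post relies on.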

This pattern is useful in scenarios such as:

- Events handled by multiple systems

- Source and target systems that lack direct integration options

- Different teams that manage the development and maintenance of the source and target systems

- A scalable integration design is required to accommodate future needs

In this blog post, we will cover how to use Dataverse and Azure Event Grid to implement an event-driven architecture in which Dataverse acts as both Event Publisher and Event Handler.

Azure Event Grid is Microsoft Azure’s event routing and messaging service. It integrates natively with many Azure services and can connect them as Event Publishers or Event Handlers. If the event publisher is not an Azure service, a custom event publisher can be created.

Dataverse as Event Handler

In this scenario, we will create a record in a Dataverse table when a file is uploaded to an Azure Storage container.

Azure Storage is a built-in event publisher (source) for Event Grid. A blob upload is an out-of-the-box event, so we do not need to create a custom topic for it; we can configure the subscribers directly. We will therefore create our Event Handler first and then point to it from an Event Subscription.

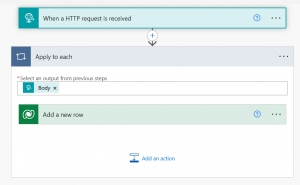

For demonstration purposes, we have kept it very simple. Below is an example Power Automate flow that acts as the event handler. It is triggered by an incoming HTTP request and creates a row in a Dataverse table.

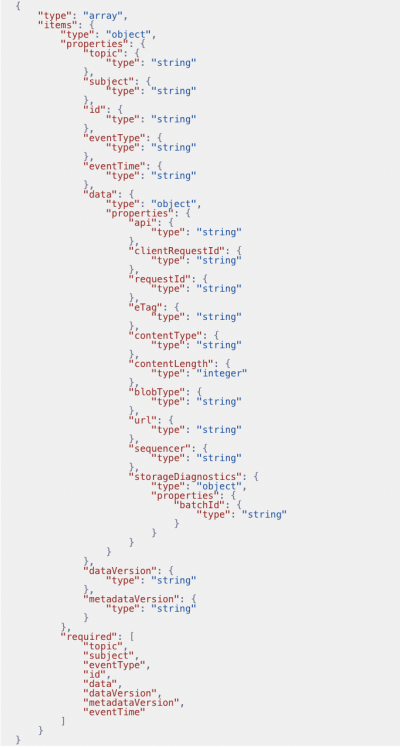

The HTTP trigger’s request body schema will be based on Event Grid’s event schema. The data object in the request is unique to each publisher while the rest of the schema remains the same.

For the Azure Blob Storage event publisher, the schema looks something like this, and this is what we have used in our example flow:
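As a rough illustration, the sketch below parses a sample Blob Created payload in the Event Grid event schema and pulls out the values a flow might write to Dataverse. All values in the payload are made-up placeholders, only a subset of the `data` fields is shown, and the output column names (`name`, `url`, `size_bytes`, `uploaded_on`) are hypothetical rather than taken from the example flow. Note that Event Grid delivers events as a JSON array, even when there is only one.

```python
import json

# Sample request body in the Event Grid event schema (placeholder values only).
SAMPLE_BODY = """
[
  {
    "topic": "/subscriptions/00000000-0000-0000-0000-000000000000/resourceGroups/example-rg/providers/Microsoft.Storage/storageAccounts/examplestorage",
    "subject": "/blobServices/default/containers/uploads/blobs/invoice.pdf",
    "eventType": "Microsoft.Storage.BlobCreated",
    "eventTime": "2024-01-01T12:00:00Z",
    "id": "00000000-0000-0000-0000-000000000001",
    "data": {
      "api": "PutBlob",
      "contentType": "application/pdf",
      "contentLength": 1024,
      "blobType": "BlockBlob",
      "url": "https://examplestorage.blob.core.windows.net/uploads/invoice.pdf"
    },
    "dataVersion": "",
    "metadataVersion": "1"
  }
]
"""


def rows_from_events(body: str) -> list:
    """Map each BlobCreated event to the column values a Dataverse row might hold."""
    rows = []
    for event in json.loads(body):
        # The envelope (id, topic, subject, eventType, ...) is the same for every
        # publisher; only the "data" object is specific to Blob Storage.
        if event.get("eventType") != "Microsoft.Storage.BlobCreated":
            continue
        data = event["data"]
        rows.append({
            "name": data["url"].rsplit("/", 1)[-1],  # file name from the blob URL
            "url": data["url"],
            "size_bytes": data["contentLength"],
            "uploaded_on": event["eventTime"],
        })
    return rows


rows = rows_from_events(SAMPLE_BODY)
```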

Once the flow is created, copy the HTTP trigger URL for future use.

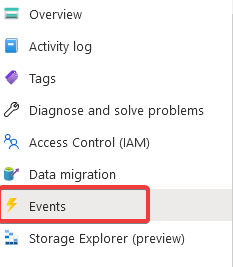

Now, we will go to the storage account in the Azure portal and navigate to the Events section of that resource.

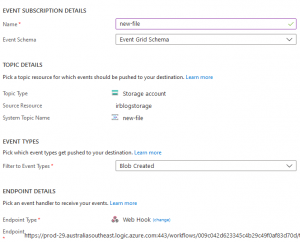

We will create a new Event Subscription, give it a name, and select the event type. For this example, we are subscribing to the Blob Created event. The Endpoint type is Web Hook, and the endpoint is the Power Automate HTTP trigger URL.

Everything is good to go now. To test it, we can upload a file to our storage container, and Event Grid will route the message to the Power Automate flow.

Dataverse as Event Publisher

In this scenario, Dataverse acts as an event publisher. We will generate an event when a new record is created in a Dataverse table. This event is sent to an Event Grid custom topic, and Event Grid routes it to an Azure Function, which acts as the event handler.

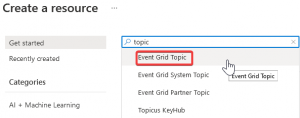

The first step in this scenario is to create an Event Grid topic. You do that in the Azure Portal.

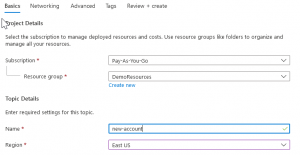

Give the topic a name and create it.

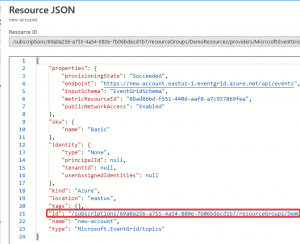

Once the topic is created, make a note of the Topic Endpoint.

Also, copy the ID from the Topic’s JSON view.

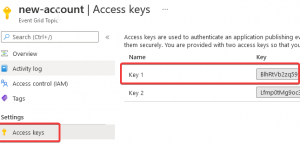

Finally, copy the access key from the Access Keys section.

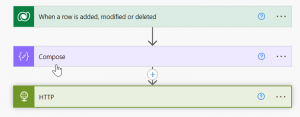

Now, our custom topic is ready to use. Next, we will create our Power Automate flow. The flow is simple in this example: it runs when a new account is created, composes a message for Event Grid, and then sends the message.

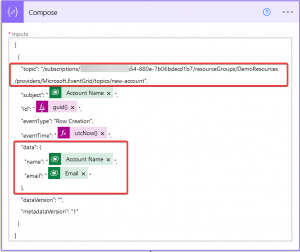

In the Compose action, the topic property is set to the ID we copied from the Topic’s JSON View, and the data object is what you want to send as part of your custom message. Please note this message follows the same Event Schema we used while receiving the message.
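The composed message can be sketched in code as follows. This is only an illustration of the Event Grid event schema, not the exact content of the Compose action: the `build_account_event` helper, the `Dataverse.Account.Created` event type, the `accounts/...` subject format, and the sample account fields are all assumptions, and the topic ID is a placeholder.

```python
import json
import uuid
from datetime import datetime, timezone


def build_account_event(topic_id: str, account: dict) -> dict:
    """Wrap account details in an Event Grid schema envelope."""
    return {
        "id": str(uuid.uuid4()),                      # unique per event
        "topic": topic_id,                            # the ID from the topic's JSON view
        "subject": "accounts/" + account["accountid"],  # hypothetical subject format
        "eventType": "Dataverse.Account.Created",     # hypothetical event type name
        "eventTime": datetime.now(timezone.utc).isoformat(),
        "data": account,                              # the custom payload
        "dataVersion": "1.0",
    }


# Placeholder topic ID and made-up account values.
TOPIC_ID = (
    "/subscriptions/00000000-0000-0000-0000-000000000000"
    "/resourceGroups/example-rg/providers/Microsoft.EventGrid/topics/example-topic"
)
event = build_account_event(TOPIC_ID, {"accountid": "a1b2c3", "name": "Contoso"})

# Event Grid expects the request body to be an array of events.
payload = json.dumps([event])
```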

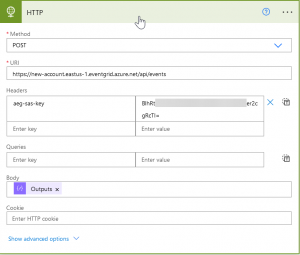

Finally, to send this to Event Grid, we use the HTTP action. The URL of the action is the Topic Endpoint, and in the headers we add a custom header, aeg-sas-key, whose value is the access key we copied earlier.

Now, our flow is ready. When a new account is created, it will send a message with some account details to Azure Event Grid. To complete the process, we will use an Azure Function as the event handler.
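For readers who want to see the equivalent of the HTTP action outside Power Automate, here is a minimal sketch using only the Python standard library. The endpoint URL, access key, and event content are placeholders, and `build_publish_request` is a hypothetical helper; the sketch only builds the request so that it can be inspected without calling Azure.

```python
import json
import urllib.request


def build_publish_request(topic_endpoint: str, access_key: str, events: list) -> urllib.request.Request:
    """Build the POST request that publishes events to an Event Grid custom topic."""
    body = json.dumps(events).encode("utf-8")  # the body is an array of events
    return urllib.request.Request(
        topic_endpoint,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "aeg-sas-key": access_key,  # the key copied from the Access Keys section
        },
    )


request = build_publish_request(
    "https://example-topic.westeurope-1.eventgrid.azure.net/api/events",  # placeholder endpoint
    "<access-key>",  # placeholder; never hard-code a real key
    [{
        "id": "00000000-0000-0000-0000-000000000002",
        "subject": "accounts/a1b2c3",
        "eventType": "Dataverse.Account.Created",  # hypothetical event type name
        "eventTime": "2024-01-01T12:00:00Z",
        "data": {"name": "Contoso"},
        "dataVersion": "1.0",
    }],
)

# To actually send the events, pass the request to urllib.request.urlopen(request).
```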

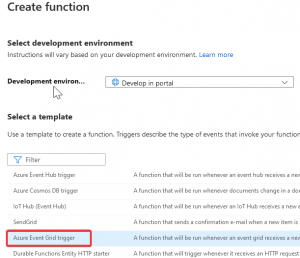

We will create a new Azure Function with an Event Grid trigger.

Make sure the trigger is configured to be Event Grid Trigger.

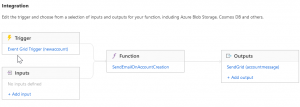

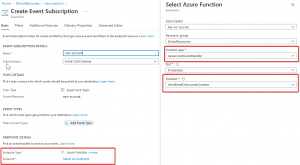

Once the Azure Function is ready and you have added your logic, we will create an event subscription under our Event Grid Topic with Azure Function as the Endpoint type. Select the Azure Function we just created as the endpoint.
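The logic inside the function depends entirely on your scenario. As a minimal sketch, assuming the custom event carries a hypothetical `Dataverse.Account.Created` event type and a `name` field in its data, the handling could look like the function below. It works on a plain dictionary so that it can be run without the Functions runtime; in a Python Azure Function with an Event Grid trigger, the binding would hand over an `azure.functions.EventGridEvent` instead, whose `get_json()` method returns the data payload.

```python
def handle_account_created(event: dict) -> str:
    """Process one event and return a short status message for logging."""
    # Ignore event types this handler was not written for.
    if event.get("eventType") != "Dataverse.Account.Created":  # hypothetical type name
        return "ignored " + str(event.get("eventType"))

    data = event.get("data", {})
    # Real logic (calling another system, writing to a database, ...) would go here.
    return "processed account " + data.get("name", "<unknown>") + " (" + event["subject"] + ")"


# Made-up event matching the shape sent by the flow.
result = handle_account_created({
    "id": "00000000-0000-0000-0000-000000000003",
    "subject": "accounts/a1b2c3",
    "eventType": "Dataverse.Account.Created",
    "eventTime": "2024-01-01T12:00:00Z",
    "data": {"name": "Contoso"},
    "dataVersion": "1.0",
})
```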

We are all good to go now!

About the Author: Irfan Rizvi

Irfan is an experienced Microsoft-certified Technology Consultant and Solutions Architect. He has more than 20 years of industry experience and has worked with a variety of Microsoft solutions. His current focus is on the Microsoft Power Platform, and he loves to keep up to date with the tech industry.